Maximum Likelihood Estimation

A little disclaimer:

I started learning ML and currently reading Ian Goodfellow's "Deep learning" and these are my first attempts to use my Statistics and Probabilities Theory knowledge, so some concepts are not directly clear for me.

Everything was more or less clear prior to Maximum Likelihood Estimation.

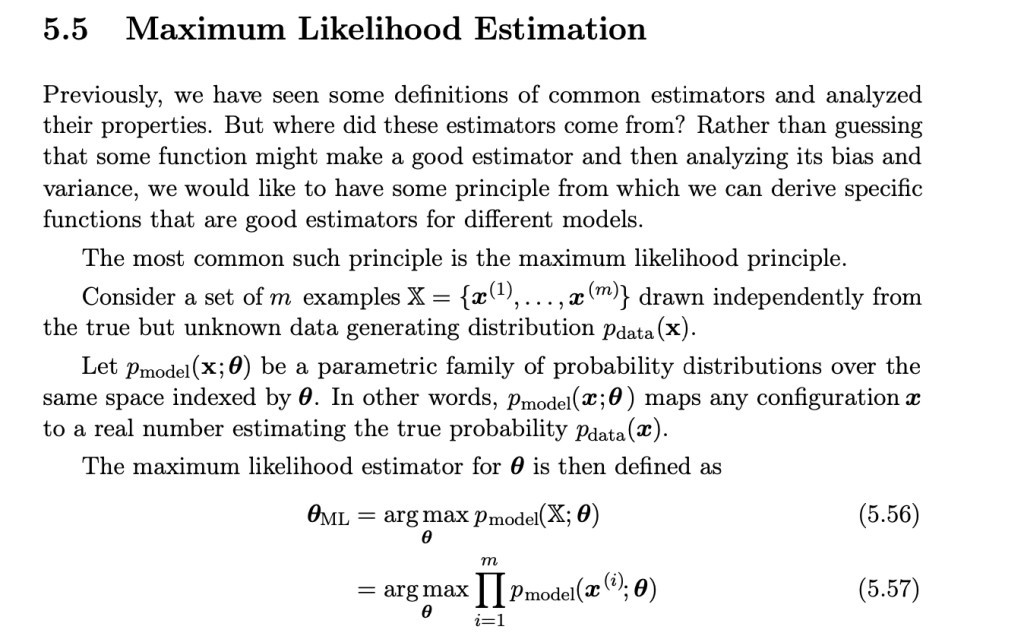

Below the author is trying to explain how it works.

"Consider a set of m examples ... drawn independently from the true but unknown data generating distribution pdata(x)"

What is meant here? That x is some random variable where pdata is some unknown probability density function?

Then "Let pmodel(x;θ) be a parametric family of probability distributions over the same space indexed by θ"

So "parametric family of probability distributions" means any PDF with some parameters, right? For example Normal with it's mean and variance? But what does "over the same space indexed by θ" mean? What is θ in current context? Is it a parameter(or set of parameters) of PDF?

Answer

- The questioner was satisfied with and accepted the answer, or

- The answer was evaluated as being 100% correct by the judge.

Kav10

Kav10

-

@Kav10 so pdata is some random value from pmodel(x;θ)?

-

No, pdata is not a random value. It is the true but unknown probability distribution that generated the actual data points in your dataset. It's not a single random value; rather, it's a function that describes how likely each possible value x is to occur in the real data distribution.

-

pmodel is a function within a parametric family of probability distributions. It's a model you create to approximate the true data distribution pdata (x).

-

So, when you perform MLE, you're trying to find the values of θ that make the model's probability distribution pmodel (x;θ) align as closely as possible with the true data distribution pdata (x). It's not about treating pdata (x) as a random value, but about matching the characteristics of the model's distribution to the characteristics of real data distribution.

-

What do you mean by "make the model's probability distribution pmodel (x;θ) align as closely as possible with the true data distribution pdata (x)"? pmodel is a family, but pdata is a single pd.

-

-

@Kav10 I mean that pdata is some pdf from pmodel?

-

No, they're not the same thing. See above comments.

-

didn't say that they are the same, I said that pdata is one particular case from pmodel family with some particular parameters, isn't it?

-

pdata (x) is not a specific case of pmodel (x;θ), but rather the actual, true distribution that generated the observed data. It is not a member of the parametric family.

-

pdata (x) is the distribution that generated your real-world data, pmodel (x;θ) is a distribution you've chosen (from a family of distributions) in an attempt to approximate pdata (x). The goal of MLE is to find the θ that makes pmodel (x;θ) most closely resemble pdata (x), so making your model's generated data as similar as possible to the real data.

-

- answered

- 2168 views

- $10.00

Related Questions

- Please check if my answers are correct - statistic, probability

- foundations in probability

- Five married couples are seated around a table at random. Let $X$ be the number of wives who sit next to their husbands. What is the expectation of $X$ $(E[X])$?

- Markov Chain Question

- Probability question

- How safe is this driver?

- please use statistics to explain spooky phenomenon

- ARIMA model output